I Went Deeper Down the Quantum Rabbit Hole. The Industry Is Full of Shit.

Read the original thread on X


A follow-up. [Part 1 here.] The more I read, the less worried I got — and the angrier.

TL;DR

- The quantum computing industry has received $40+ billion in government funding and produced under $1 billion in revenue. Insiders are selling stock at a 216:1 ratio. The hype is not accidental.

- Expert technology predictions are wrong 86% of the time for long-term forecasts. Domain insiders are systematically ~20 years more optimistic than outsiders. Fusion has been “20 years away” for 50 years.

- The people who actually build and break cryptographic systems — Green, Schneier, Thaler, Bernstein — are far more skeptical than the people selling quantum hardware. The father of post-quantum cryptography says: “I hope quantum computing somehow fails.”

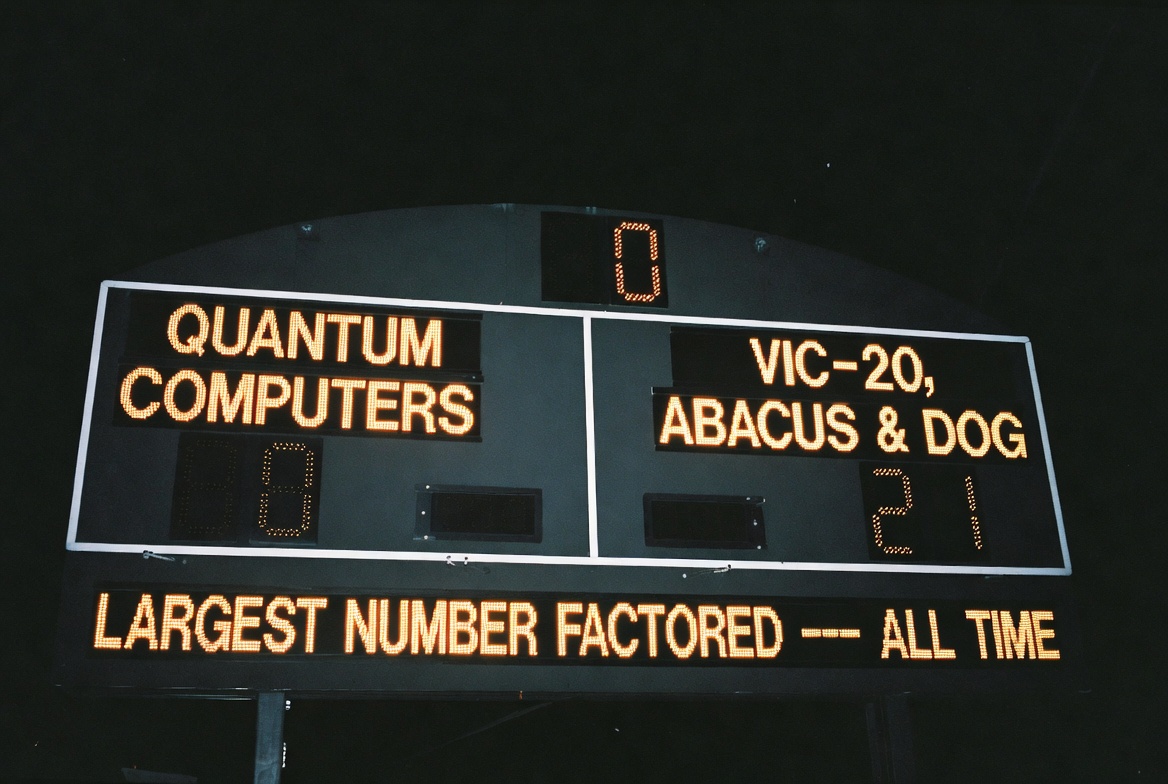

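- The largest number ever factored by Shor’s algorithm on a quantum computer is 21. A 1981 Commodore VIC-20 can do the same thing. Breaking Bitcoin means solving a 256-bit elliptic-curve discrete log, which classically is about as hard as factoring a ~900-digit number.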

- Bitcoin should still upgrade its cryptography — not because quantum computers are coming, but because relying on a single cryptographic assumption for a $2 trillion network is bad engineering. Classical math has broken more crypto than quantum computers ever have.

Why a Part 2

After publishing Part 1, I got two kinds of responses. The “Bitcoin is doomed” crowd told me I was too dismissive. The “nothing to see here” crowd told me I was too worried.

Then I kept reading. Another 40 papers. The Cloudflare roadmap. Alex Pruden’s hardware progress thread. Matthew Green’s commentary. Tim Palmer’s PNAS paper on quantum mechanics itself. Insider trading data. Prediction market odds. Technology forecasting research going back decades.

And something shifted. Not toward panic — further away from it. The deeper I went into the actual evidence, the more the quantum threat looked like a story told primarily by people with a financial interest in you believing it.

That doesn’t mean it’s fake. It means the timeline is almost certainly bullshit, the urgency is manufactured, and the real reason to upgrade Bitcoin’s cryptography has nothing to do with quantum computers.

Let me show you what I found.

Part 1: The Base Rate Problem (Or, Why Expert Predictions Are Garbage)

Before I give you anyone’s estimate for when quantum computers will break cryptography, you need to understand how reliable expert predictions are. The answer: not very.

A study of 300+ technology forecasts published in PNAS found that long-term predictions (11-30 years) have a 14% success rate. Not 50%. Not 30%. Fourteen percent. Six out of seven expert predictions for transformative technologies are wrong.

Philip Tetlock ran a 20-year study tracking over 30,000 expert forecasts on political and economic questions. The average expert performed at roughly the level of random guessing. He called it the “dart-throwing chimpanzee” problem: you’d get similar accuracy from a chimp throwing darts at a board of possibilities.

It gets worse. Research from AI Impacts found that people inside a field are systematically ~20 years more optimistic about their technology’s timeline than outsiders looking at the same evidence. The mechanism is obvious: the people most motivated to work on quantum computing are the ones who believe it will succeed soon. That’s selection bias baked into every expert survey.

Fusion energy has been “20 years away” for 50 years. A formal academic review in Springer tracked three decades of fusion predictions. Over 30 calendar years, the estimated distance to commercial fusion shrank by a net 1.5 years. In one decade, it actually moved backward — experts said it was further away than they’d said ten years prior.
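Taking the review’s figures at face value, the implied closing rate is easy to work out. Assuming, purely for illustration, that the rate stays constant and the remaining gap is the proverbial 20 years:

$$
\frac{1.5\ \text{years closed}}{30\ \text{calendar years}} = 0.05
\qquad\Rightarrow\qquad
\frac{20\ \text{years remaining}}{0.05} = 400\ \text{calendar years}
$$

At that pace, “20 years away” is four centuries away.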

Autonomous driving predictions from 2015-2016 targeted 2019-2021. Lyft said a majority of rides would be autonomous “within five years.” Ford promised steering-wheel-free robotaxis. Level 5 is still not here in 2026: five-plus years past those targets and counting.

Every single one of these predictions was made by smart people with access to the best data available. They were all wrong. Not by a little. Often by decades.

Now here are the expert estimates for quantum computers breaking cryptography.

Part 2: What the Experts Actually Say (Read Between the Lines)

Watch who says what — and what they sell.

Adam Back (Hashcash inventor, cited in the Bitcoin whitepaper): 20-40 years.

Jensen Huang (NVIDIA CEO): In January 2025, he said useful quantum computers were 15-30 years away. This was honest. The market wiped $8 billion off quantum computing stocks in a single day. Within 60 days, Huang walked it back, hosting a “Quantum Day” where he let QC CEOs explain why he was wrong. Draw your own conclusions about which estimate he actually believed and which one the industry needed him to say.

Bruce Schneier (cryptographer): “I am also skeptical that we are going to see useful quantum computers anytime soon.” He puts his estimate around 2039 and frames the uncertainty this way: “We don’t know if it’s ‘land a person on the surface of the moon’ hard, or ‘land a person on the surface of the sun’ hard.”

Matthew Green (Johns Hopkins, one of the most respected cryptographers alive): “Apple even spent time adding post-quantum encryption to its iMessage protocol — this means Apple users are now safe from quantum computers that don’t exist.” On Google’s 2026 paper: “It’s an algorithm for a computer that’s at best years away from existing, so I’d say publish.”

Justin Thaler (Georgetown CS professor, a16z crypto research partner): “Timelines for a quantum computer powerful enough to break modern cryptography are being widely overstated, and that exaggeration is driving costly and in some cases misguided security decisions.” His bottom line: “Implementation security, not quantum computing, is the dominant cryptographic risk for years to come.”

Scott Aaronson (UT Austin, leading quantum complexity theorist): Refuses to give a timeline. Notes breaking RSA would require “someone investing $100 billion.” He’s probably the most intellectually honest person in the field — and he keeps his personal wealth in index funds, not quantum stocks.

Craig Gidney (Google Quantum AI): 10% chance by 2030. Also notes he does NOT see another 10x improvement in qubit estimates under current assumptions — the optimization curve may be flattening.

Prediction markets (Manifold, 2026): RSA-2048 broken before 2030: ~20%. SHA-256 broken: 8%. Consumer quantum products: near-zero.

Daniel Bernstein — the person who literally invented the term “post-quantum cryptography” in 2003 and co-designed one of four NIST PQC winners: “I hope quantum computing somehow fails.”

Notice the pattern. The people who build and break cryptographic systems for a living (Green, Schneier, Thaler, Bernstein) are systematically more skeptical than the people who build quantum computers. This is not a coincidence. It’s the insider-optimism bias from the AI Impacts research made flesh.

Part 3: Follow the Money

Here’s where it gets ugly.

The quantum computing industry has received over $40 billion in government funding globally, plus billions in private capital. Total 2024 industry revenue: under $1 billion. That’s a ratio that would embarrass a Ponzi scheme.

Quantum computing stocks trade at price-to-sales ratios that make the dot-com bubble look conservative. Rigetti: 360:1. IonQ: 188:1. For context, at the peak of the dot-com bubble, Amazon was at 31:1 and eBay at 144:1.

And here’s the stat that tells you what insiders actually think: over five years, executives at IonQ, Rigetti, and D-Wave collectively sold $930 million in stock while buying $4.3 million. That’s a 216:1 sell-to-buy ratio. The Rigetti CEO personally sold $11 million. D-Wave’s entire C-suite is selling. Not a single insider at Rigetti, D-Wave, or QCI purchased a share in the trailing year.

Let that sit. The people running quantum computing companies are dumping stock as fast as they legally can while telling you the future is almost here.

Every single vendor roadmap has been revised backward. IBM promised 1 million qubits by 2030 — now targets 100,000 by 2033. PsiQuantum promised 1 million qubits by 2025 — nothing materialized. Google quietly dropped its million-qubit target entirely. BCG explicitly admitted their near-term NISQ predictions were “overly optimistic.”

Quantum Predictions vs. Reality

| Who | What They Predicted | When Predicted | Target Date | What Actually Happened | How Far Off |
|---|---|---|---|---|---|
| PsiQuantum | 1 million qubits | 2020 | 2025 | No publicly demonstrated product progress by 2024. Shifted roadmap to 2027, then likely 2028-2029. As of 2026, Omega chipset announced but no million-qubit system. | 4+ years (and counting) |
| IBM | 1 million qubits | 2020 | 2030 | Revised to 100,000 qubits by 2033. Pivoted strategy entirely, abandoning monolithic scaling (Condor) in favor of a modular approach (Heron). The “1 million” target quietly disappeared. | 3+ years (target moved, scope reduced 10x) |
| Google | 1 million error-corrected qubits | ~2020 | ~2029 | Now targeting 100-1,000 logical qubits by 2030. The million-qubit claim evaporated. Current Willow chip: 105 qubits. | Indefinite (goal quietly dropped) |
| D-Wave | 1,024-qubit machine | 2007 | Mid-2008 | Did not deliver. D-Wave One (128 qubits) arrived in 2011. 1,000+ qubits not achieved until ~2015 (D-Wave 2X). | 7 years |
| D-Wave | Quantum speedup over classical | 2011-2013 | Immediate | Troyer & Lidar found “no speed increase compared to classical computers.” McGeoch’s 3,600x claim was revised to ~30x (equivalent to ~30 classical cores). | Not achieved (disputed to this day) |
| Google | Quantum supremacy (Sycamore): “10,000 years classically” | Oct 2019 | Achieved | IBM immediately countered: 2.5 days classically. By 2022, Chinese researchers simulated it on a classical supercomputer. The “10,000 years” was off by orders of magnitude. | Supremacy claim eroded |
| D-Wave | Quantum supremacy on useful problem | Mar 2025 | Achieved | Within days, Flatiron Institute solved the same problem classically with 4 GPUs in 3 days. EPFL published similar classical results. | Claim disputed immediately |
| IBM | NISQ utility demonstrated (127-qubit experiment) | 2023 | Achieved | Claims “quickly refuted by further studies that simulated IBM’s experiment on a conventional laptop.” | Claim refuted |
| Jensen Huang (Nvidia) | Useful QC is “15-30 years away,” 20 years most likely | Jan 2025 | 2040-2055 | Walked it back 2 months later at GTC, saying “my comments came out wrong.” Hosted “Quantum Day” to let QC CEOs explain why he was wrong. | Self-corrected in 60 days |
| BCG (consultants) | Near-term NISQ value creation | 2021 | 2021-2025 | BCG explicitly admitted “assumptions for near-term value creation in the NISQ era have proved optimistic and must be revised.” | Wrong by their own admission |
| Honeywell/Quantinuum | 10x quantum volume increase per year for 5 years | Mar 2020 | 2020-2025 | Actually delivered on this (QV 128 to 2M+). Rare example of a prediction that was met or exceeded. | Prediction met (notable exception) |
| IonQ | Commercially available interconnected system | 2024-2025 | 2028 | Roadmap: 2M physical qubits / 80K logical qubits by 2030. Analysts warn scaling from ~50-100 qubits to 10K+ in 2-3 years is “unprecedented.” | TBD (high skepticism) |
| Rigetti | Commercial quantum advantage | ~2022 | ~2025 | Timeline slipped to 2027, now looks like 2029. Delayed 108-qubit system deployment due to coupling issues. $201M net loss in 2024. | 4+ years (and counting) |
| Field consensus | RSA-2048 broken by quantum computer | Various (2010s) | ~2030 | Old estimate: needed 20M physical qubits (decades away). New Gidney estimate (2025): <1M qubits, under a week. Hardware not there yet. Still no CRQC. | TBD (goalposts moved both ways) |
| Geordie Rose (D-Wave) | Qubit doubling every 18 months (“Rose’s Law”) | ~2003 | Ongoing | For D-Wave’s annealing qubits specifically, this roughly held. But annealing qubits are not equivalent to universal gate-model qubits, a distinction often blurred. | Technically met, practically misleading |

https://x.com/nvk/status/2041632785928994816

The DARPA program manager for quantum computing calls himself the “Chief Quantum Skeptic” and openly questions whether fault-tolerant quantum computing is even possible.

And then there’s the goalpost moving. The industry started with “quantum supremacy.” When that was challenged (IBM disputed Google’s claim within days; Chinese researchers simulated it classically within years), it became “quantum advantage.” When that proved elusive, it became “quantum utility.” When IBM’s quantum utility claims were “quickly refuted by further studies that simulated IBM’s experiment on a conventional laptop,” it became “quantum readiness.” Each rebrand is a retreat disguised as progress.

QuEra’s own Chief Commercial Officer puts it without the spin: “If someone says quantum computers are commercially useful today, I say I want to have what they’re having.”

Part 4: The Scoreboard

Let’s look at what quantum computers have actually done.

The largest number ever factored by Shor’s algorithm on real quantum hardware is 21. That’s 3 times 7. Done in 2012. An attempt at 35 failed.

In 2025, cryptographers Peter Gutmann and Stephan Neuhaus published a paper in which they replicated every quantum factoring record on a 1981 Commodore VIC-20, an abacus, and a trained dog. The dog was slowest. The paper is titled “Replication of Quantum Factorisation Records with a VIC-20, an Abacus, and a Dog.” It’s published on the IACR ePrint Archive. It’s not a joke — or rather, the joke is the scoreboard.
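To see how small that record is, here is a minimal Python sketch of the classical half of Shor’s algorithm applied to 21. In Shor’s algorithm, the quantum computer’s only job is to find the order r of a base a modulo N; this sketch simply finds r by brute force, which is trivial at this size, and then does the standard gcd post-processing. The function names are hypothetical and not taken from the Gutmann and Neuhaus paper.

```python
from math import gcd
from typing import Optional, Tuple


def order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, by brute force (a must be coprime to n).
    This is the step Shor's algorithm assigns to the quantum computer."""
    x, r = a % n, 1
    while x != 1:
        x = (x * a) % n
        r += 1
    return r


def shor_classical(n: int, a: int) -> Optional[Tuple[int, int]]:
    """Shor's classical reduction from order-finding to factoring (2 <= a < n),
    with the quantum step replaced by brute force. Returns None when this
    base fails and another a should be tried."""
    g = gcd(a, n)
    if g != 1:
        return g, n // g          # lucky guess: a already shares a factor with n
    r = order(a, n)
    if r % 2 == 1:
        return None               # odd order: try another a
    y = pow(a, r // 2, n)
    if y == n - 1:
        return None               # a^(r/2) = -1 (mod n): try another a
    p = gcd(y - 1, n)             # y^2 = 1 but y != +-1 (mod n), so p is a proper factor
    return p, n // p


print(order(2, 21))            # 6
print(shor_classical(21, 2))   # (7, 3)
```

For N = 21 and a = 2 the order is 6, so a^(r/2) = 8, and gcd(7, 21) recovers the factor 7. The brute-force `order` loop takes time exponential in the number of digits, which is exactly the part a fault-tolerant quantum computer is supposed to replace. At 21, there is nothing to replace.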

Now, an important distinction. The factoring records above apply to RSA, not Bitcoin. Bitcoin uses Elliptic Curve Cryptography (secp256k1), which Shor’s algorithm also breaks, but through the Elliptic Curve Discrete Logarithm Problem (ECDLP) rather than integer factoring. A Bitcoin public key is Q = dG, where G is the curve’s fixed generator point and d is the private key; breaking it means recovering d from Q on a 256-bit curve, a different computational problem. And the quantum scoreboard for ECDLP is even emptier than factoring: no quantum computer has solved a meaningful elliptic curve discrete log instance. Ever. Classically, a 256-bit curve is about as hard as a ~3,000-bit RSA key, but the point stands on its own: both problems are out of reach for any quantum hardware that exists or is on any credible roadmap.
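A toy version makes the Q = dG problem concrete. The sketch below assumes a tiny prime (1009) in place of secp256k1’s 256-bit prime but keeps secp256k1’s curve equation, y^2 = x^3 + 7. Computing Q from d is fast (double-and-add); recovering d from Q is done here by plain brute force, which only works because this group has about a thousand elements. For a real 256-bit curve, the best known classical attack (Pollard’s rho) still needs on the order of 2^128 steps. This is an illustration, not a usable implementation: it is not constant-time and should never touch real keys.

```python
from typing import Optional, Tuple

P = 1009  # toy prime field (assumption); secp256k1 uses a 256-bit prime
B = 7     # curve y^2 = x^3 + 7, the same equation secp256k1 uses

Point = Optional[Tuple[int, int]]  # None is the point at infinity (identity)


def on_curve(pt: Point) -> bool:
    if pt is None:
        return True
    x, y = pt
    return (y * y - x ** 3 - B) % P == 0


def add(p1: Point, p2: Point) -> Point:
    """Affine point addition; note the separate identity, inverse, and doubling cases."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    (x1, y1), (x2, y2) = p1, p2
    if x1 == x2 and (y1 + y2) % P == 0:
        return None                                        # R + (-R) = identity
    if p1 == p2:
        lam = 3 * x1 * x1 * pow(2 * y1, -1, P) % P         # tangent slope (curve has a = 0)
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P, -1, P) % P    # chord slope
    x3 = (lam * lam - x1 - x2) % P
    return x3, (lam * (x1 - x3) - y1) % P


def mul(k: int, pt: Point) -> Point:
    """Double-and-add: k*pt in O(log k) steps. Fast, but NOT constant-time."""
    result: Point = None
    while k > 0:
        if k & 1:
            result = add(result, pt)
        pt = add(pt, pt)
        k >>= 1
    return result


def find_point() -> Tuple[int, int]:
    """First curve point with y != 0, found by tabulating square roots mod P."""
    roots = {}
    for y in range(P):
        roots.setdefault(y * y % P, y)
    for x in range(P):
        y = roots.get((x ** 3 + B) % P)
        if y:  # skips non-residues (None) and the order-2 case y == 0
            return x, y
    raise ValueError("no suitable point")


def brute_force_log(g: Point, q: Point) -> int:
    """Find d with d*g == q by trying d = 0, 1, 2, ... (work grows with the group size)."""
    acc: Point = None
    d = 0
    while acc != q:
        acc = add(acc, g)
        d += 1
        if acc is None:
            raise ValueError("q is not a multiple of g")
    return d


G = find_point()
d = 777               # the "private key"
Q = mul(d, G)         # the "public key": easy to compute
found = brute_force_log(G, Q)
assert on_curve(G) and on_curve(Q) and mul(found, G) == Q
```

Because G’s order may be smaller than 777, `found` is only guaranteed to equal `d` modulo that order, which is why the check compares points rather than integers. The special cases inside `add` are also one reason short-Weierstrass curves like secp256k1 are considered awkward to implement safely.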

The entire urgency argument rests on an assumption that usually goes unstated, though Scott Aaronson states it explicitly: once Shor’s algorithm runs on a fault-tolerant quantum computer, even for small numbers, scaling to RSA and ECC is “merely a matter of scaling up.” That “merely” is doing an extraordinary amount of work. If there’s a hard ceiling somewhere between 10 and 1,200 logical qubits (and several serious physicists argue there is), then the urgency collapses entirely.

No quantum computer has ever solved a real-world problem faster or cheaper than a classical computer. Not once. In over 30 years.

Part 5: Why It Might Be Never

I’m not being flippant. Serious physicists and mathematicians — people with named theorems and Royal Society fellowships — argue that fault-tolerant quantum computing at cryptographic scale faces barriers that may be fundamental.

Leonid Levin (co-inventor of NP-completeness): “Quantum amplitudes need accuracy to multi-hundredth decimals, but we have never seen a physical law valid to over a dozen decimals.” Quantum mechanics has been verified to ~12 decimal places. Shor’s algorithm needs hundreds. That gap has never been tested.

Michel Dyakonov (theoretical physicist): A 1,000-qubit system requires controlling ~10^300 continuous parameters simultaneously — more than the number of subatomic particles in the universe. His assessment: “No, never.”
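Dyakonov’s number comes from a simple count: a general n-qubit state is described by 2^n complex amplitudes, so 1,000 qubits means about 2^1000 of them. A few lines of Python confirm the magnitude (this counts amplitudes only; treating each as two real parameters roughly doubles it):

```python
import math


def amplitude_exponent(n_qubits: int) -> float:
    """log10 of 2**n_qubits, the number of complex amplitudes in a general n-qubit state."""
    return n_qubits * math.log10(2)


n = 1000
digits = len(str(2 ** n))  # exact integer arithmetic, no floating point
print(f"2**{n} ~ 10^{amplitude_exponent(n):.1f} ({digits} digits)")
# prints: 2**1000 ~ 10^301.0 (302 digits)
```

That is about 1.07 × 10^301, the “~10^300” in Dyakonov’s argument; common estimates put the number of particles in the observable universe around 10^80.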

Gil Kalai (Hebrew University, mathematician): Argues that quantum noise exhibits irreducible correlations that worsen with system complexity, making error correction at scale fundamentally impossible, not merely hard. His conjectures remain unproven after 20 years, and some of his experimental predictions have been wrong; that is a mark against him, but not a refutation.

Tim Palmer (Oxford, Fellow of the Royal Society): His Rational Quantum Mechanics framework, published in PNAS in March 2026, argues that gravity discretizes quantum mechanics itself. In his framework, entanglement hits a hard ceiling at roughly 200-1,000 qubits. Beyond that, Shor’s algorithm loses its exponential advantage. Palmer isn’t arguing that engineering is too hard. He’s arguing that quantum mechanics doesn’t work the way we think it does at scale. His paper was reviewed by Nicolas Gisin and three other respected quantum foundations researchers. Aaronson expects near-term experiments to refute it. The prediction is concrete and falsifiable within five years.

And there’s an intuitive version of this argument that’s harder to shake. Grover’s algorithm, the one that’s supposed to threaten Bitcoin mining, claims you can search an unsorted list of N items with O(√N) lookups instead of N. There is no classical analogy for this. You cannot search faster than you can look. It’s an all-or-nothing prediction of quantum mechanics: either nature gives you exactly this speedup, or it doesn’t. And what that speedup produces in practice is the absurd result covered in the first article: quantum mining would require the Sun’s energy output to do what a $2,000 ASIC does better. When a theory predicts you need a star’s energy to underperform a chip that plugs into a wall outlet, maybe the theory doesn’t describe what happens at the scales required.
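The size of Grover’s speedup is easy to put in numbers. Finding one marked item among N = 2^n candidates takes up to N classical lookups, while Grover’s algorithm needs about (π/4)·√N iterations. The sketch below assumes a single marked item and ignores everything that makes real quantum iterations expensive (error correction, and the cost of evaluating SHA-256 inside every iteration):

```python
import math


def classical_queries(n_bits: int) -> int:
    """Worst-case brute-force lookups to find one marked item among N = 2**n_bits."""
    return 2 ** n_bits


def grover_queries(n_bits: int) -> int:
    """Approximate optimal Grover iteration count, floor(pi/4 * sqrt(N)), one marked item."""
    return math.floor(math.pi / 4 * math.isqrt(2 ** n_bits))


for n in (20, 64, 256):
    c, g = classical_queries(n), grover_queries(n)
    print(f"N = 2^{n}: classical {c:.2e}, Grover {g:.2e}, ratio {c / g:.2e}")
```

For a 256-bit search the count drops from about 10^77 to about 10^38: a quadratic speedup, not an exponential one, and those iterations must run one after another on a fault-tolerant machine.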

The quantum computing industry has 6-9 competing hardware architectures — superconducting, trapped ion, neutral atom, photonic, topological, and more. In technology history, this is called the “era of ferment”: the automobile industry had 275 manufacturers with three different engine types before consolidation. It’s normal. It’s also the phase where observers mistake activity for progress.

The fusion parallel is the most precise. Thirty years of predictions. Net progress toward the goal: 1.5 years. One decade moved backward.

Part 6: The Real Worries (And They’re Not What You Think)

I don’t want to dismiss everything. That’s the other side’s mistake. Here’s what actually keeps me up at night:

The exposed surface is growing. Taproot (P2TR), Bitcoin’s newest address format, places the tweaked public key directly in the output, so it sits on-chain from the moment coins are received. Bitcoin’s most recent upgrade made things worse for quantum resistance. About 6.26 million BTC have exposed public keys. That’s 30-35% of the supply. It’s not a reason to panic. It is a reason to prepare.

Bitcoin’s governance is slow. No soft fork has activated since November 2021. Cloudflare already has 65% of its traffic post-quantum encrypted. Bitcoin is at 0%. The gap isn’t technical — it’s governance. But slow isn’t the same as stuck. BIP-360 is in draft status with a testnet running. The work has started.

Migration is a race condition. To move Bitcoin to a quantum-resistant address, you have to spend from the old one — exposing your public key in the process. Solutions exist (commit-delay-reveal protocols, pre-emptive poison pills), but they only work for Bitcoin whose public key is still hidden behind a hash. For the ~6.26 million BTC with already-exposed keys, including Satoshi’s coins, there is no post-disaster fix.
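The commit-delay-reveal idea can be sketched with nothing but a hash function. The toy below is hypothetical: `ToyLedger`, its rules, and the `delay` parameter are illustrative assumptions, not BIP-360 or any proposed Bitcoin consensus rule, and SHA-256 stands in for Bitcoin’s actual HASH160 address hashing. The owner first publishes a commitment binding the still-hidden public key to a new destination, waits a fixed number of blocks, and only then reveals the key. An attacker who learns the key at reveal time would need a commitment that is already old enough, which they cannot have.

```python
import hashlib
import os
from dataclasses import dataclass, field


def h(*parts: bytes) -> bytes:
    """SHA-256 over the concatenated parts (stand-in for Bitcoin's HASH160)."""
    return hashlib.sha256(b"".join(parts)).digest()


@dataclass
class ToyLedger:
    """Hypothetical chain state enforcing a commit-delay-reveal migration rule."""
    delay: int                                        # blocks a commitment must age
    height: int = 0
    commitments: dict = field(default_factory=dict)   # commitment -> height first seen
    spent: set = field(default_factory=set)           # pubkey hashes already migrated

    def mine(self, blocks: int = 1) -> None:
        self.height += blocks

    def commit(self, commitment: bytes) -> None:
        # Only the earliest sighting counts, so re-posting cannot reset the clock.
        self.commitments.setdefault(commitment, self.height)

    def reveal(self, pubkey_hash: bytes, pubkey: bytes, new_dest: bytes, nonce: bytes) -> bool:
        """Accept a migration only if the key matches and was committed >= delay blocks ago."""
        if pubkey_hash in self.spent or h(pubkey) != pubkey_hash:
            return False
        seen = self.commitments.get(h(pubkey, new_dest, nonce))
        if seen is None or self.height - seen < self.delay:
            return False
        self.spent.add(pubkey_hash)
        return True


# Coins are locked to h(pubkey); the key itself has never been published.
ledger = ToyLedger(delay=6)
pubkey = os.urandom(33)                  # placeholder bytes, not a real EC public key
locked_to = h(pubkey)
owner_dest, owner_nonce = b"owner-pq-address", os.urandom(16)

ledger.commit(h(pubkey, owner_dest, owner_nonce))   # reveals nothing about pubkey
ledger.mine(6)

# The owner broadcasts the reveal; an attacker watching the mempool now sees pubkey.
attacker_dest, attacker_nonce = b"attacker-address", os.urandom(16)
ledger.commit(h(pubkey, attacker_dest, attacker_nonce))
assert not ledger.reveal(locked_to, pubkey, attacker_dest, attacker_nonce)  # too new
assert ledger.reveal(locked_to, pubkey, owner_dest, owner_nonce)            # aged 6 blocks
ledger.mine(6)
assert not ledger.reveal(locked_to, pubkey, attacker_dest, attacker_nonce)  # already spent
```

The same sketch shows why this does nothing for coins whose keys are already exposed: an attacker who knows the key in advance can post a commitment early and wait out the delay just as easily as the owner.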

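Satoshi’s coins won’t be moved. Roughly 1.1 million BTC sit in P2PK outputs whose public keys have been exposed since the day they were mined. Either no one holds the keys anymore, or Satoshi is absent and not coming back to migrate them. There is no clean solution.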

Those are real engineering problems. They deserve serious attention. But notice what’s NOT on my worry list: “quantum computers will break Bitcoin in 5 years.” The evidence doesn’t support that timeline. The evidence barely supports that timeline in 20 years. And the evidence for “never” is stronger than most people realize.

Part 7: The Actual Reason to Upgrade (It’s Not Quantum)

Here’s the part that matters most, and it has nothing to do with quantum computers.

Cryptographic systems broken by quantum computers to date: zero.

Largest number factored by a quantum computer running Shor’s algorithm: 21. A 1981 Commodore VIC-20 can factor 21 too, and much larger numbers besides.

Cryptographic systems broken by classical methods: too many to count. DES, MD5, SHA-1, RC4, SIKE, the Enigma machine: all fell to classical cryptanalysis and ordinary computers, not quantum hardware. SIKE, a candidate in NIST’s post-quantum process, was broken in 2022 by two researchers (Wouter Castryck and Thomas Decru) with a classical attack that recovers a key in about an hour on a single CPU core.

secp256k1 — the elliptic curve Bitcoin uses — could fall to a mathematical breakthrough tomorrow. No quantum computer needed. Just a sufficiently clever number theorist with a new insight into the discrete logarithm problem. Daniel Bernstein and Tanja Lange’s SafeCurves project rates secp256k1 as failing 4 of 11 safety criteria — not because the math is broken, but because the curve makes it unnecessarily hard to write secure implementations. Bitcoin Core’s libsecp256k1 library heroically mitigates these risks through constant-time algorithms and formal verification. But compiler updates have repeatedly broken these guarantees, requiring emergency patches.

This is the actual reason Bitcoin should adopt alternate cryptographic schemes. Not because quantum computers are coming — they might never arrive. But because relying on a single cryptographic assumption for a $2 trillion network is exactly the kind of risk that serious engineering addresses proactively.

The quantum FUD is a distraction from this quieter, more real concern. And ironically, the work being done to prepare for quantum threats — BIP-360, post-quantum signatures, hash-based alternatives — also addresses the classical cryptanalysis risk. The right thing is being done, for the wrong reason. That’s fine, as long as it gets done.

What You Should Do

If you’re a holder: stop reusing addresses. Watch for BIP-360. Keep long-term holdings in addresses you’ve never spent from and whose public key is still hidden behind a hash (not Taproot, which exposes the key from the start). Be cautious with your xpub: in a post-quantum world, it’s as sensitive as your seed phrase. And ignore the headlines. The actual papers are more interesting and less scary.

If you’re a developer: BIP-360 needs reviewers. The testnet is running. Start the governance conversation about legacy UTXOs. Research commit-delay-reveal and poison-pill emergency protocols. The 7-year migration timeline estimate needs to compress.

If you just read a scary headline: remember that 59% of shared links are never clicked. The headline is designed to make you feel something. The paper underneath says something different. Go read the paper.

The Honest Conclusion

Here’s where I ended up after 200+ hours and 85 research documents:

The quantum computing industry is running one of the most successful marketing campaigns in the history of technology. Forty billion dollars in, under a billion out, insiders selling 216:1, every roadmap revised backward, every success criterion relaxed, and still the headlines say “almost there.”

The underlying physics might be real. The engineering might eventually work. But the timelines being sold to policymakers, investors, and the public are not based on evidence. They are based on the financial needs of an industry that has burned through more capital than it has produced value, by a ratio that should embarrass everyone involved.

Bitcoin’s cryptography will need upgrading eventually. Not because quantum computers are five years away — they almost certainly aren’t. Not because the threat is imminent — the prediction markets say ~20% chance by 2030, and prediction markets are more reliable than experts. But because classical cryptanalysis has been killing cryptographic systems for as long as they’ve existed, because secp256k1 has known weaknesses that are mitigated heroically rather than resolved structurally, and because a $2 trillion network deserves defense in depth.

The house isn’t on fire. The house may never catch fire from this direction. But the smoke alarm salesmen are getting very rich telling you it might.

Sources: 85 research documents spanning quantum computing resource estimates, Bitcoin vulnerability analysis, technology prediction accuracy (PNAS, Tetlock), vendor incentive analysis, and cryptographer commentary. Key sources: Google Quantum AI (2026), Palmer RaQM (PNAS 2026), Fye et al. forecasting accuracy, Gutmann & Neuhaus VIC-20 replication (IACR 2025), Matthew Green (JHU), Justin Thaler (Georgetown/a16z), Bruce Schneier, BIP-360, SafeCurves, Manifold Markets, and QC industry insider trading data.